Trust Busters

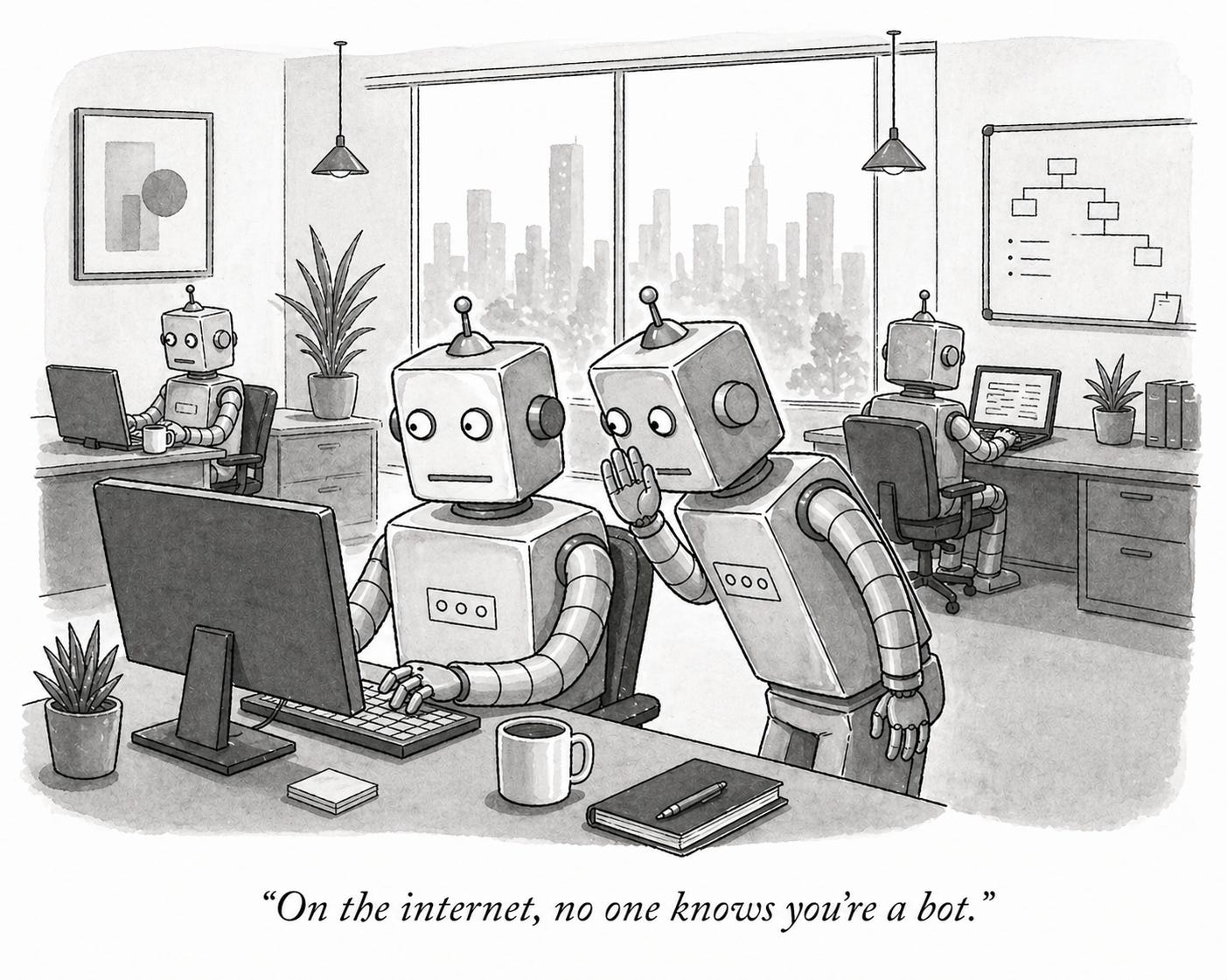

On the internet, no one knows you’re a bot.

Well, technically that’s not true; I think you can still figure that out. But that will get increasingly difficult, and in any case, may not matter: Half the traffic on the internet is now machine-generated, and in an age of agentic AI, it’s hard to imagine an online world that isn’t dominated by AI systems, mostly talking to each other.

The question is, how will they know who they can trust? And more precisely, what are the signals that will signify trust to a machine, as opposed to a human, and who’s building that infrastructure now? (I’m sure someone is; please tell me who, because I’d love to know more.)

All of which matters greatly to anyone in the news and public interest information space: We derive our value from and stake our relevance on being trusted sources.

Right now, we humans have imperfect, but more or less working, ways to assess the trustworthiness of information: We look for brand names, look to who wrote it, or who has endorsed it, or who has engaged with it; sometimes we’ll see if the site looks legitimate, or if the URL seems OK. Most of which are human-legible signals, because (doh) humans are the ones trying to make those decisions. Sure, there’s a ton of gaming of that system — everything from Russian troll armies to clickbait news sites to unscrupulous marketeers — but the playing field is built around human cognition.

And the machines that help us find that information were, in many ways, created in our image — to see and understand the internet through our eyes. The Google search algorithm looks to see how often a site is quoted by other sites, gives more weight to certain trusted sites, and so on. In other words, it tries to act like a human would, if a human had the tirelessness and attention span of a machine.
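For readers who want to see the mechanics, here is a minimal sketch of that kind of link-based ranking. It is a simplified PageRank-style calculation, not Google’s actual algorithm. The function name `rank_sites`, the `trusted` seed list, and the example site names are all assumptions made up for illustration.

```python
def rank_sites(links, trusted=(), damping=0.85, iterations=50):
    """Score sites by who links to them (a simplified PageRank-style sketch).

    links   -- dict mapping each site to the list of sites it links to
    trusted -- optional seed sites that receive the baseline weight
               (a hypothetical stand-in for "certain trusted sites")
    """
    sites = set(links) | {t for targets in links.values() for t in targets}
    n = len(sites)

    # Baseline weight: spread evenly, or concentrated on the trusted seeds.
    seeds = [s for s in trusted if s in sites]
    if seeds:
        base = {s: (1.0 / len(seeds) if s in seeds else 0.0) for s in sites}
    else:
        base = {s: 1.0 / n for s in sites}

    scores = {s: 1.0 / n for s in sites}
    for _ in range(iterations):
        new = {s: (1 - damping) * base[s] for s in sites}
        for site in sites:
            targets = links.get(site, [])
            if targets:
                # A site passes its score on to the sites it links to.
                share = damping * scores[site] / len(targets)
                for target in targets:
                    new[target] += share
            else:
                # A site with no outgoing links hands its score back to the baseline.
                for s in sites:
                    new[s] += damping * scores[site] * base[s]
        scores = new
    return scores


# Hypothetical example: three sites link to "c.example", which links back to one of them.
example_links = {
    "a.example": ["c.example"],
    "b.example": ["c.example"],
    "c.example": ["a.example"],
    "d.example": ["c.example"],
}
example_scores = rank_sites(example_links)
```

In this toy graph the most-linked site ends up with the highest score, and passing `trusted=["d.example"]` raises that site’s score even though nothing links to it. Both signals are ones a human would recognize, which is the point: the machine is counting endorsements the way we would if we had the patience.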

That’s great for now, and I assume that Gen AI systems hunting for information do much the same thing, although they deliver summaries rather than links, even if some include citations. (Whether anyone actually checks the citations, or checks them on Wikipedia for that matter, is another issue.) That puts end users one more step removed from being able to verify the information themselves, but if you trust a system’s search process and the accuracy of its summarization, then you can be reasonably confident that what it tells you is, if not the truth, at least the consensus.

But what happens when humans stop using the web? If we move into a world — as many believe we will — where we deputize our agents to go forth and find stuff for us, often negotiating with other agents that have access to that stuff, then how do we know — more importantly, how do our agents know — that the bot they’re negotiating with has the right stuff?

What are the signals it will look for? The human-legible factors I listed above won’t be relevant anymore, if sites optimize for machines to crawl them — as they should — rather than people.

It’s tempting to suggest that people, or rather bots, will simply default to big brand names, and certainly there’s an argument that brand and reputation will increase in value in this universe; a bot will uprank information coming from the New York Times over something from madeupnews.com (which, by the way, seems to be a real site). And that’s perhaps one solution.

But it’s a solution that brings us back to an era of gatekeepers and big-name media, or at least media with big marketing budgets. One of the great beauties — and drawbacks — of the internet is that it has allowed millions of voices to proliferate, and for us to be able to tap into all kinds of expertise. I’m not sure a world where bots only trust larger institutions is good for us, or for our information commons.

Perhaps one solution is a broadly accepted system to verify the identity of bots, as Gary Liu at Terminal 3 is trying to build. That way, even if the bot you’re dealing with doesn’t have the most accurate information in the world, at least you’ll know who you’re dealing with, and you can properly discount it. It’s not perfect: a verified agent can still lie to your face, but at least it’s lying to your face.

Then there’s what I suggested in my last post, which is to build systems that can deconstruct claims and examine where they have gaps in reasoning, what assumptions they depend on, what facts they assert are true, and what other perspectives there might be on the same issue. Which takes us one step closer to assessing the strength of an analysis, but critically still depends on the system being able to find and verify facts.

Sannuta Raghu’s work on “news atoms” might come into play here, as well — an ambitious plan to try to embed, at the sentence level, some level of sourcing and provenance so that LLMs can build that knowledge into the summaries they create.
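To make the idea of sentence-level provenance concrete, here is a rough sketch of what a summary might look like if each sentence carried its sourcing along with it. This is not Raghu’s schema, which I haven’t seen in detail. The `SourcedSentence` class, its fields, and the `cite` function are all hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourcedSentence:
    """One sentence plus the provenance it carries (all fields are assumptions)."""
    text: str
    source: str      # person or document the claim traces back to
    published: str   # date of the original reporting, as an ISO string
    verified: bool   # whether the claim was independently confirmed


def cite(sentences):
    """Join sentences into a summary with one numbered footnote per distinct source."""
    notes = []
    parts = []
    for sentence in sentences:
        label = f"{sentence.source}, {sentence.published}"
        if label not in notes:
            notes.append(label)
        marker = notes.index(label) + 1
        flag = "" if sentence.verified else " (unverified)"
        parts.append(f"{sentence.text}{flag} [{marker}]")
    footnotes = [f"[{i}] {note}" for i, note in enumerate(notes, start=1)]
    return " ".join(parts), footnotes


# Hypothetical usage with made-up sentences and sources.
summary, footnotes = cite([
    SourcedSentence("The council approved the budget.", "Council minutes", "2025-01-15", True),
    SourcedSentence("Two members objected.", "Council minutes", "2025-01-15", True),
    SourcedSentence("A recount is planned.", "Anonymous aide", "2025-01-16", False),
])
```

The design choice worth noticing is that the sourcing travels with the sentence rather than with the article, so a summary that keeps only two sentences out of twenty can still say where each one came from.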

These are all interesting avenues to pursue, even if no single one seems like a magic bullet. But the broader point is that we’re expending a fair amount of energy thinking about the trust problem in an AI world based on what the internet looks like now. And I suspect the internet will look very different, very soon.

I don’t have an answer to that. I hope someone does.
